Impact Evaluators, Public Goods, and Growth-Driving Economies: TIG as a Case Study

Key Takeaways

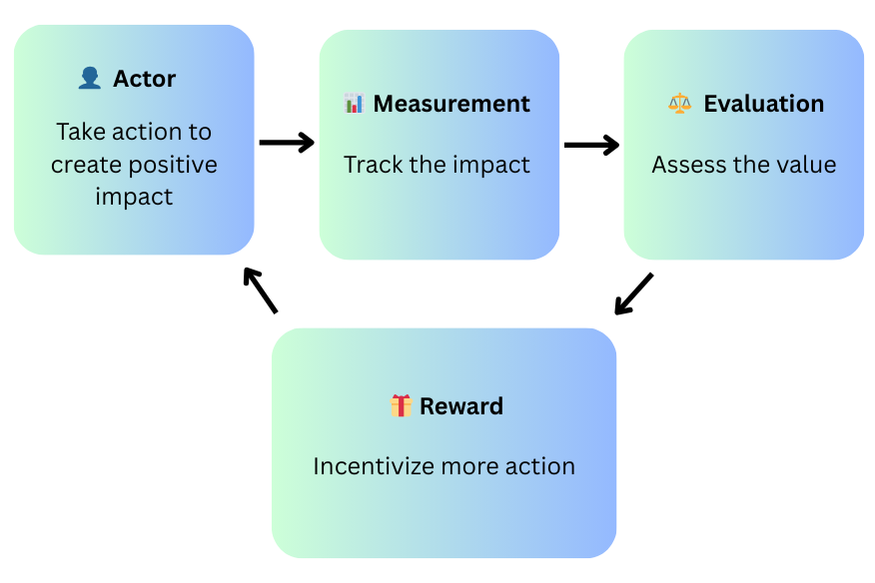

- Impact Evaluators solve the public goods funding problem by creating a continuous action-measurement-evaluation-reward cycle that retrospectively and iteratively distributes rewards based on actual demonstrated impact rather than speculative promises or upfront grants.

- TIG implements an Optimisable Proof of Work mechanism where miners (Benchmarkers) compete by solving computational challenges using algorithms submitted by Innovators, creating a synthetic market that naturally computes algorithmic efficiency through economic signals rather than arbitrary cryptographic puzzles.

- The dual licensing model sustains TIG's flywheel by combining open data licenses (ensuring immediate public good creation) with commercial licenses (recycling revenue into the token economy), allowing real-world algorithmic adoption to generate spillover benefits while funding continued innovation.


TL;DR

- Public goods are hard to fund because of the free-rider problem and tragedy of the commons.

- Impact Evaluators (IEs) fix this by rewarding contributions after measurable impact is proven, creating a positive feedback loop: action → evaluation → reward → more action.

- The Innovation Game (TIG) is an IE for algorithmic innovation: Innovators create new algorithms, Benchmarkers test them, adoption shows utility, and rewards flow proportionally to demonstrated impact.

- Dual licensing sustains the loop: open licenses create public goods, while commercial licenses recycle revenue into the token economy and drive real-world spillovers.

Public goods (PGs) are resources that benefit everyone, not just the people who create or pay for them. Think of things like open-source software, clean air, public infrastructure, or shared scientific knowledge.

But funding public goods is notoriously difficult. Why? Because of the free-rider problem: if people can benefit without contributing, many will simply… not contribute. This dynamic often leads to the tragedy of the commons, where rational individuals acting in their own self-interest collectively deplete or degrade a shared resource, making everyone worse off.

Traditional funding models, like grants, proposal-based funding, or speculative investment, have proven effective for established institutions and well-defined projects, as evidenced by successful programs such as the ERC and the NSF. However, these models often struggle with decentralized, rapidly evolving public goods work because of their inherent characteristics: lengthy approval processes, bureaucratic overhead, and difficulty coordinating incentives across distributed contributors working on uncertain outcomes.

This is where Impact Evaluators (IEs) come in: a new model for value creation and public goods funding designed to kick off a positive feedback loop:

Create impact → Get rewarded → Stay incentivized → Create more impact.

IEs flip the script: instead of funding work before it happens (like a grant), they reward people after the impact is made, and they can do so in an iterative, automated loop. You perform an action, it gets evaluated for its value to the network or ecosystem, and you get rewarded. Repeat the process, and you keep earning.

Sounds good, but still abstract? Let’s make it real. Imagine a project that incentivizes participants to build better algorithms to solve tough optimization problems, like planning vehicle routes to reduce traffic and carbon emissions. Their work directly benefits the world: less fuel used, less CO₂ emitted. The better their algorithms, the more impact they create, and the more they get rewarded.

That’s an example of an Impact Evaluator in action, and it is what The Innovation Game (TIG) does. TIG applies the IE model to developing algorithms that solve high-impact problems across many domains valuable to society.

In this post, we’ll unpack what Impact Evaluators really are, why they’re a breakthrough for value creation and public goods funding, and how TIG brings this paradigm to life.

What are Impact Evaluators?

Impact Evaluators are mechanisms that distribute rewards retrospectively and iteratively, based on actual impact. Rather than relying on central decision-makers, IEs define a repeating system of:

- 📊 Measurement – Who did what?

- ⚖️ Evaluation – Determine value and deserved reward.

- 🎁 Reward – Distribute reward accordingly.

- 🔄 Repeat – Continue the cycle to sustain impact.
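The four steps can be sketched in a few lines of Python. This is a minimal illustration, assuming a fixed reward pool per round and a simple proportional evaluation rule; the function names, the numeric impact scores, and the pool size are hypothetical and do not describe any particular IE.

```python
from dataclasses import dataclass


@dataclass
class Contribution:
    participant: str
    impact: float  # measured impact for this round (hypothetical units)


def measure(round_data: dict[str, float]) -> list[Contribution]:
    """Measurement: record who did what, clamping negative scores to zero."""
    return [Contribution(p, max(0.0, v)) for p, v in round_data.items()]


def evaluate(contribs: list[Contribution]) -> dict[str, float]:
    """Evaluation: convert measured impact into each participant's share."""
    total = sum(c.impact for c in contribs)
    if total == 0:
        return {c.participant: 0.0 for c in contribs}
    return {c.participant: c.impact / total for c in contribs}


def reward(shares: dict[str, float], pool: float) -> dict[str, float]:
    """Reward: split this round's fixed pool according to the shares."""
    return {p: share * pool for p, share in shares.items()}


def run_rounds(rounds: list[dict[str, float]], pool_per_round: float) -> dict[str, float]:
    """Repeat: run the cycle once per round and accumulate each participant's payouts."""
    balances: dict[str, float] = {}
    for round_data in rounds:
        payouts = reward(evaluate(measure(round_data)), pool_per_round)
        for participant, amount in payouts.items():
            balances[participant] = balances.get(participant, 0.0) + amount
    return balances
```

For example, `run_rounds([{"alice": 3.0, "bob": 1.0}, {"alice": 1.0, "bob": 1.0}], 100.0)` pays Alice 75 and Bob 25 in the first round, then 50 each in the second, for totals of 125 and 75. Real IEs differ mainly in how the `evaluate` step is carried out (voting, sensors, cryptographic proofs), which is where the live examples below diverge.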

This continuous action → measurement → evaluation → reward cycle shapes incentives:

When participants can anticipate that impactful actions will reliably lead to rewards, it becomes more profitable to keep doing work that benefits the community.

Importantly, retroactive doesn’t mean the reward has to come long after the action; in some cases, the time window can be short enough that it feels almost immediate. IEs thus align incentives around measurable progress, making them well suited to spinning a flywheel that drives continuous growth, innovation, and value creation.

This might sound familiar, and for good reason. There are already live examples of Impact Evaluators out in the wild. Here are a few:

- Filecoin’s & Optimism’s RetroPGF – Both programs apply an IE model to reward projects that have delivered measurable value to their ecosystems. The measured impact is the tangible contribution to network growth, resilience, or adoption. Measurement often relies on qualitative and contextual indicators rather than strictly quantitative metrics. Evaluation is conducted by a designated group (committee or badgeholder community), typically through structured voting processes on a curated set of eligible projects. Rewards are then distributed from a fixed pool to the projects deemed to have generated the highest net benefit. The presence of recurring funding rounds creates a sustained loop, reinforcing incentives for continued contribution.

- Glow – A DePIN project pioneering a new model for solar subsidies. The impact is the verified clean energy and carbon reduction that competing solar farms produce. Measurement and evaluation are enabled through a combination of AI models, IoT sensors, satellite imagery, and on-site audits, ensuring accuracy without relying on self-reporting. Rewards are allocated in recurring rounds, so the higher a farm's net carbon reduction in each period, the greater its payout. This drives a positive feedback loop where farms seek higher efficiency, greater environmental benefit, and more rewards over time.

- Filecoin – Filecoin is a proof-of-useful-work Impact Evaluator where the impact is providing verifiable, decentralized data storage. Measurement happens through cryptographic proofs: Proof-of-Replication and Proof-of-Spacetime. Evaluation is the on-chain verification of these proofs, ensuring that storage is both authentic and persistent. Rewards in FIL are distributed proportionally to the amount of storage contributed. Filecoin creates a self-reinforcing flywheel: as more storage is added and more clients use the network, the economic value of providing high-quality storage grows, attracting more providers and strengthening the network’s utility and resilience.

- Bitcoin – Yes, even Bitcoin can be seen as an Impact Evaluator. The impact is securing the network through proof-of-work. Measurement is the proof-of-work itself: over each ~10-minute block interval, a miner's chance of producing the next valid block is proportional to their share of the total hash rate. Evaluation is the network's verification that a block's proof-of-work meets the current difficulty target. The rewards are the block subsidy, which follows a fixed issuance schedule, plus transaction fees; each block's reward goes to the miner who found it, so over many blocks each miner's expected earnings track their share of the total hash rate. While the reward pool appears zero-sum in the short term, the network’s growth and adoption have historically increased the real-world value of those rewards, creating a strong flywheel effect.
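In the Bitcoin case, proportional reward holds only in expectation, since each block pays a single winner. The sketch below computes a miner's expected daily reward and simulates block winners to show realized shares converging toward hash-rate shares. It assumes a 3.125 BTC subsidy (the value after the April 2024 halving) and a hypothetical average fee per block; hash-rate units are arbitrary because only the ratio matters.

```python
import random

BLOCKS_PER_DAY = 144  # one block per ~10 minutes, on average


def expected_daily_reward(miner_hashrate: float, network_hashrate: float,
                          subsidy_btc: float, avg_fees_btc: float) -> float:
    """Expected BTC earned per day, proportional to the miner's hash-rate share."""
    share = miner_hashrate / network_hashrate
    return share * BLOCKS_PER_DAY * (subsidy_btc + avg_fees_btc)


def simulate_block_winners(hashrates: dict[str, float], n_blocks: int,
                           seed: int = 0) -> dict[str, int]:
    """Assign each block to exactly one miner, with probability proportional to hash rate."""
    rng = random.Random(seed)
    miners = list(hashrates)
    weights = [hashrates[m] for m in miners]
    wins = {m: 0 for m in miners}
    for winner in rng.choices(miners, weights=weights, k=n_blocks):
        wins[winner] += 1
    return wins


if __name__ == "__main__":
    # A miner with 1% of network hash rate; 0.125 BTC average fees is a hypothetical figure.
    print(expected_daily_reward(1.0, 100.0, subsidy_btc=3.125, avg_fees_btc=0.125))
    wins = simulate_block_winners({"big_pool": 3.0, "small_pool": 1.0}, n_blocks=100_000)
    print(wins["big_pool"] / 100_000)  # close to 0.75
```

With these inputs the expected reward is about 4.68 BTC per day, even though on any given day the realized number of blocks won fluctuates around the expectation.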

Quick side note: if you enjoy game theory, you can think of IEs as a real-world implementation of the Public Goods Game with institutional rewards built in.

The Innovation Game

The Innovation Game (TIG) applies the Impact Evaluator (IE) framework to algorithmic innovation. It introduces a novel proof-of-work mechanism called Optimisable Proof of Work (OPoW), in which participants compete for block rewards in TIG tokens not by solving arbitrary cryptographic puzzles, but by submitting and running algorithms that solve computational challenges designed to drive algorithmic innovation. This establishes a synthetic market for algorithms, in which miners (called Benchmarkers in TIG) are incentivized to identify and adopt the most efficient ones. Unlike traditional proof-of-work systems, OPoW allows the underlying algorithms to be optimized while maintaining network decentralization and security. The synthetic market creates artificial demand for algorithmic optimization; this demand serves both as a signal of adoption and as the basis for assessing the rewards granted to the Innovators who create the algorithms. IP licensing, in turn, captures value to sustain the broader token economy.

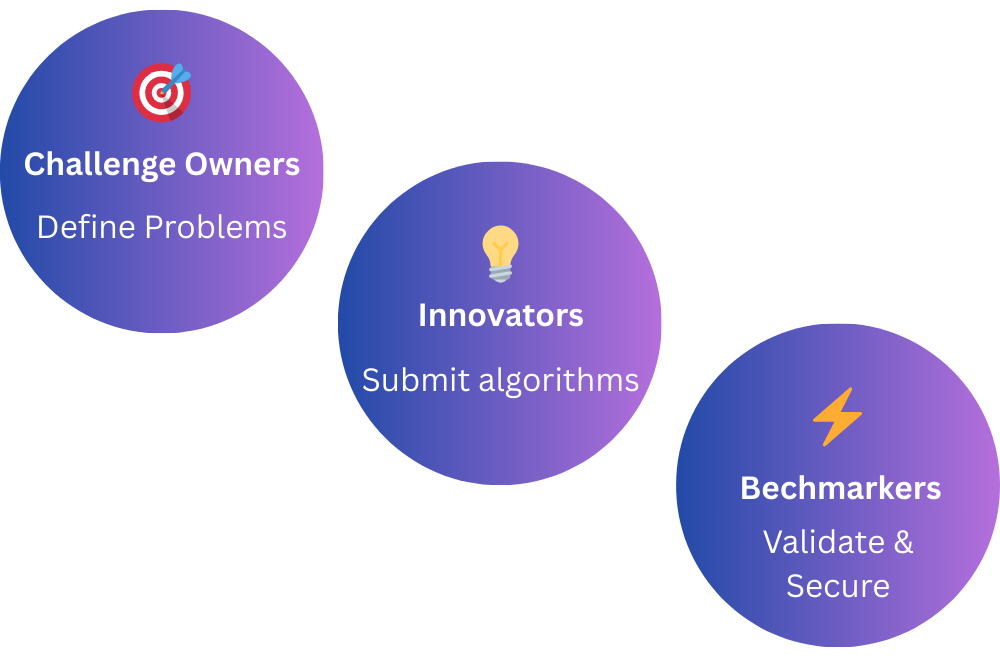

TIG defines three core participant roles:

- Challenge Owners: They define high-impact algorithmic challenges, such as problems in AI, cryptography, or logistics. Challenge Owners receive a portion of TIG emissions proportional to the recent improvements delivered by Innovators on their challenges.

- Innovators: These are algorithm developers who submit solutions to active challenges. Their rewards are based on how often their algorithms are used by Benchmarkers to successfully solve problem instances, incentivizing performance, not just participation. Innovators drive the development of novel methods with measurable utility.

- Benchmarkers: Like miners in Bitcoin, but rather than mining by computing arbitrary hashes, Benchmarkers compete to solve computational challenges using algorithms submitted by Innovators. Their profit-driven choices create market signals about algorithmic efficiency, factoring in real economic costs like hardware requirements and computational complexity.
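The profit-driven choices described for Benchmarkers can be illustrated with a toy model. This is a hypothetical sketch, not TIG's protocol: it assumes each Benchmarker simply picks the algorithm with the most solutions per unit of cost on its own hardware, and that adoption is the count of Benchmarkers choosing each algorithm. `AlgorithmProfile` and all numbers are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlgorithmProfile:
    name: str
    solutions_per_hour: float  # throughput on this Benchmarker's hardware (hypothetical)
    cost_per_hour: float       # hardware and energy cost of running it (hypothetical)


def pick_algorithm(profiles: list[AlgorithmProfile]) -> str:
    """Profit-driven choice: the algorithm with the most solutions per unit of cost."""
    best = max(profiles, key=lambda a: a.solutions_per_hour / a.cost_per_hour)
    return best.name


def adoption_counts(benchmarkers: dict[str, list[AlgorithmProfile]]) -> dict[str, int]:
    """Aggregate each Benchmarker's individual choice into an adoption signal."""
    counts: dict[str, int] = {}
    for profiles in benchmarkers.values():
        choice = pick_algorithm(profiles)
        counts[choice] = counts.get(choice, 0) + 1
    return counts


gpu_profiles = [AlgorithmProfile("algo_a", 200.0, 4.0), AlgorithmProfile("algo_b", 60.0, 2.0)]
cpu_profiles = [AlgorithmProfile("algo_a", 20.0, 2.0), AlgorithmProfile("algo_b", 50.0, 2.0)]
print(adoption_counts({"gpu_rig_1": gpu_profiles, "gpu_rig_2": gpu_profiles, "cpu_box": cpu_profiles}))
# {'algo_a': 2, 'algo_b': 1}
```

Neither algorithm wins everywhere: the GPU-friendly `algo_a` dominates on the GPU rigs while `algo_b` wins on the CPU box, and the aggregate counts encode that cost-aware trade-off without any central judge.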

TIG creates a synthetic market for algorithms, where performance determines value and incentives are tied directly to measurable impact. You can think of this market as an analog computer: its economic dynamics naturally ‘compute’ which algorithms are most efficient, producing signals that guide the allocation of rewards. By removing gatekeepers and peer-review bottlenecks, TIG fosters a meritocratic ecosystem where algorithmic innovation is discoverable, trackable, and economically rewarded.

TIG as an Impact Evaluator

TIG exemplifies the principles of Impact Evaluators (IEs) in action. The impact evaluated is very specific: the economic efficiency of an algorithm within TIG's synthetic economy, which serves as a proxy for potential future real-world value.

At its core, TIG retroactively rewards participants based on the utility and performance of their algorithmic contributions, as defined by its synthetic market. Unlike traditional grant-making or speculative funding models, TIG doesn’t reward promises: it allocates rewards based on measurable impact.

Innovators submit algorithms, not to fulfill a proposal, but to solve open scientific and technical challenges. Benchmarkers, acting as decentralized evaluators, compete to identify which algorithms perform best. Their computational effort is not wasted on arbitrary cryptographic puzzles; instead, it is directed toward testing and validating innovation. Ongoing adoption of algorithms by Benchmarkers reflects their market recognition within TIG’s synthetic economy, serving as a proxy for prospective real-world impact. Because Innovators’ rewards are tied directly to the degree of adoption their algorithms achieve, value consistently flows toward solutions that demonstrate sustained utility, reinforcing incentives for lasting impact.

This cycle of submission → evaluation → reward → continued adoption repeats over time, reinforcing incentives for participants to keep improving algorithms and for the ecosystem to keep identifying and adopting the best solutions. The process simultaneously selects winners and sets objective performance baselines, creating a market that transparently prices algorithmic value.

TIG’s reward mechanism aligns closely with IE logic:

- Measurement happens through benchmark competition results.

- Evaluation is driven by the success and efficiency of algorithms over time.

- Reward is distributed via token emissions, allocated in proportion to the demonstrated impact.
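The proportional reward step can be sketched as follows. This is a simplified illustration, not TIG's actual emission formula: it assumes adoption is measured as the number of solutions Benchmarkers found with each algorithm during a round, and that a fixed Innovator emission is split in proportion to that count. The function name, the ownership mapping, and the numbers are hypothetical.

```python
def allocate_innovator_rewards(solutions_by_algorithm: dict[str, int],
                               owner_of: dict[str, str],
                               emission: float) -> dict[str, float]:
    """Split one round's Innovator emission in proportion to each algorithm's adoption.

    Adoption is approximated here as the number of solutions found with each
    algorithm; an Innovator who owns several algorithms receives the sum.
    """
    total = sum(solutions_by_algorithm.values())
    rewards: dict[str, float] = {}
    if total == 0:
        return rewards  # no adoption this round, nothing to distribute
    for algorithm, n_solutions in solutions_by_algorithm.items():
        innovator = owner_of[algorithm]
        rewards[innovator] = rewards.get(innovator, 0.0) + emission * n_solutions / total
    return rewards


round_solutions = {"algo_a": 600, "algo_b": 300, "algo_c": 100}
owners = {"algo_a": "ada", "algo_b": "ben", "algo_c": "ada"}
print(allocate_innovator_rewards(round_solutions, owners, emission=1000.0))
# {'ada': 700.0, 'ben': 300.0}
```

Running this once per round is what makes the loop iterative: if Benchmarkers shift to a better algorithm in the next round, the emission shifts with them.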

Licensing closes the incentive loop. TIG combines two complementary pathways for algorithmic IP:

- Commercial License – Organizations can purchase rights to deploy cutting-edge algorithms in their products. This revenue doesn’t just monetize innovation; it flows back into the TIG economy, strengthening token value and keeping rewards attractive for participants. As companies adopt more efficient algorithms, the spillover effects (better services, lower costs, reduced energy use, etc.) benefit society at large. Importantly, each commercial adoption recycles capital into the system, providing the funding that sustains the open-innovation flywheel.

- Open Data License – At the same time, TIG ensures that the algorithms remain available as public goods. By making the code and data freely usable (with the requirement to share improvements), TIG fosters open collaboration, transparency, and rapid scientific progress.

Together, these two channels sustain the flywheel: open licensing guarantees immediate public-good creation, while commercial licensing provides the revenue stream that funds and reinforces it.

By turning global computational labor into a collective search for better tools, TIG aligns individual incentives with collective progress.

Conclusions

TIG is an Impact Evaluator for algorithmic innovation. Challenge Owners define computational challenges with high potential for real-world impact, spanning domains like AI, cryptography, and logistics. Innovators submit algorithms for solving such challenges, and Benchmarkers competitively measure algorithm performance on objective problem instances. Algorithm adoption by Benchmarkers signals economic efficiency within TIG’s synthetic economy, serving as a proxy for the algorithms' potential future real-world value. Rewards are distributed in proportion to the demonstrated performance. In this way, TIG turns measurable impact into an economic primitive, channeling computation toward socially valuable algorithmic improvements.

Thus, when rewards follow and incentivize impact, decentralized systems become flywheels for public-good creation, overcoming the tragedy of the commons.
